Docker Image

A Docker image can be described as a template containing all of the required configuration, whereas a container is a running instance of a Docker image. Unlike containers, images are not bound to a state, i.e. an image does not have a state (such as running or stopped).

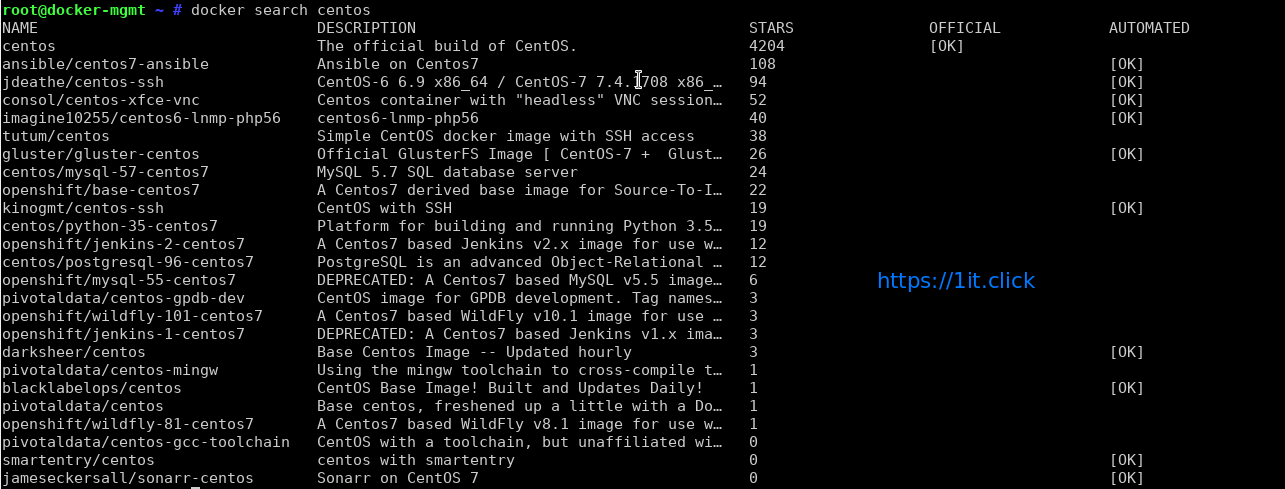

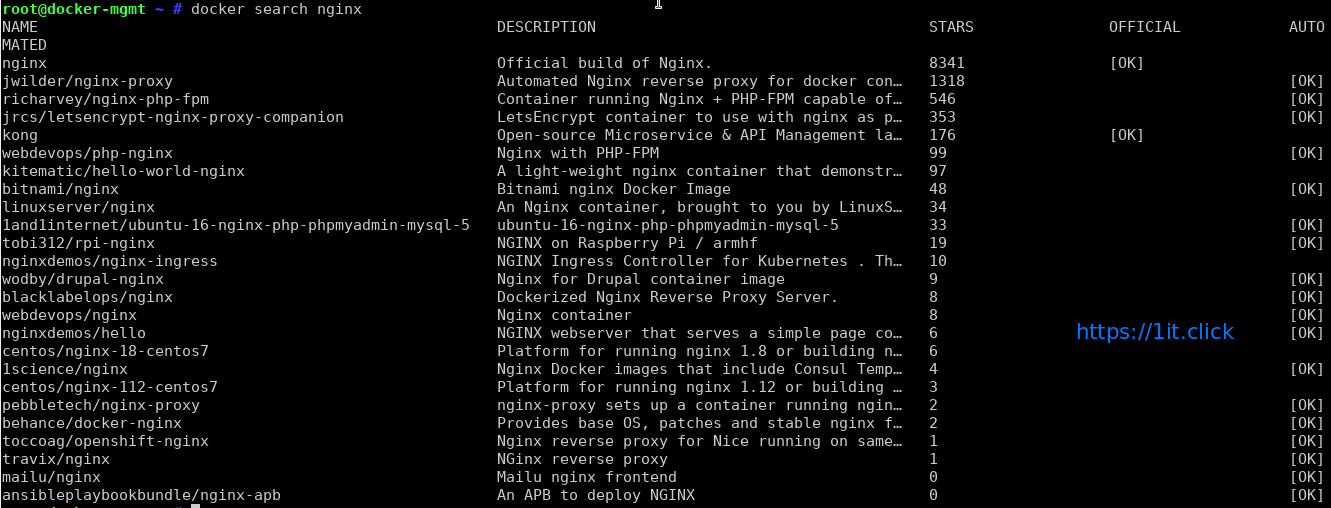

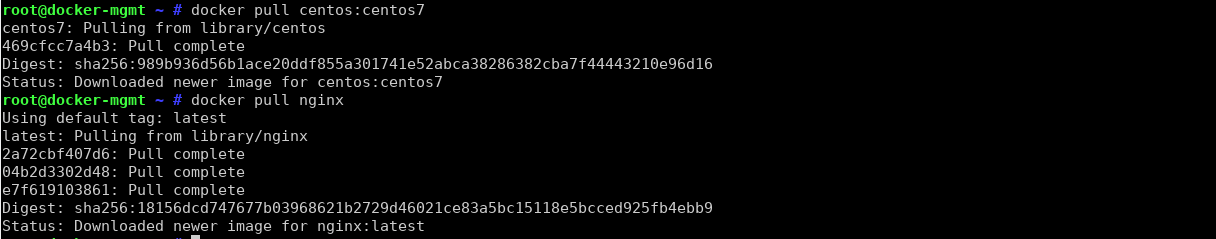

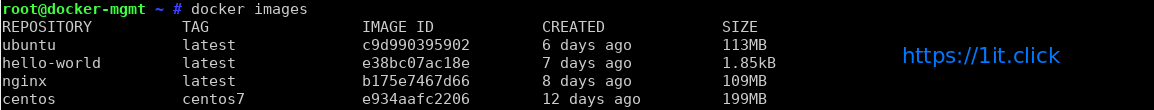

Official images are available from OS and application vendors, along with custom images published by the community.

When working with containers, DevOps and application engineers generally create their own Docker images containing all of their customizations; this enables them to launch preconfigured containers quickly.

Methods for custom image creation

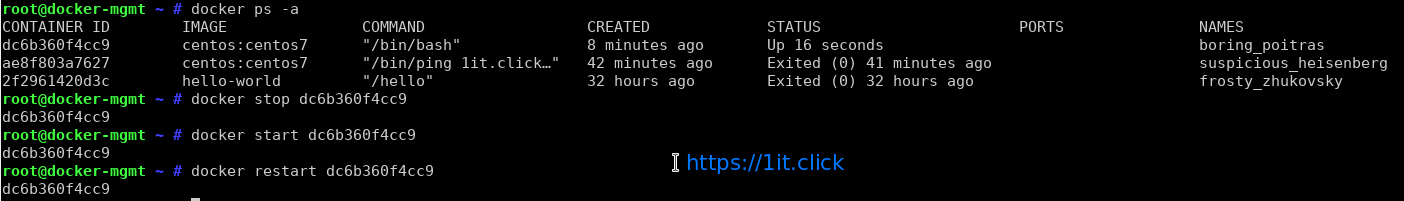

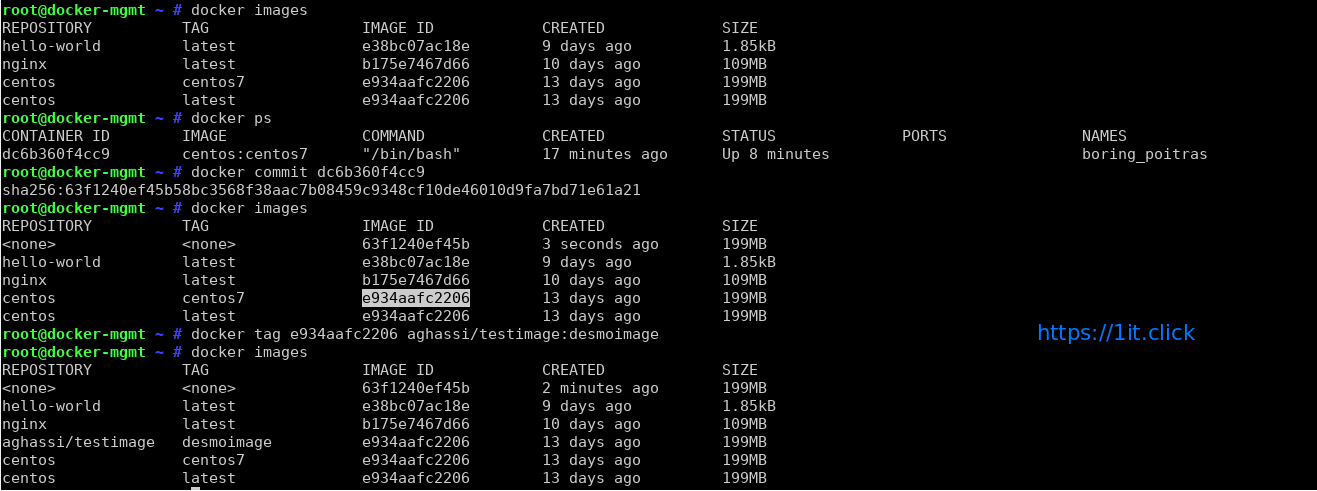

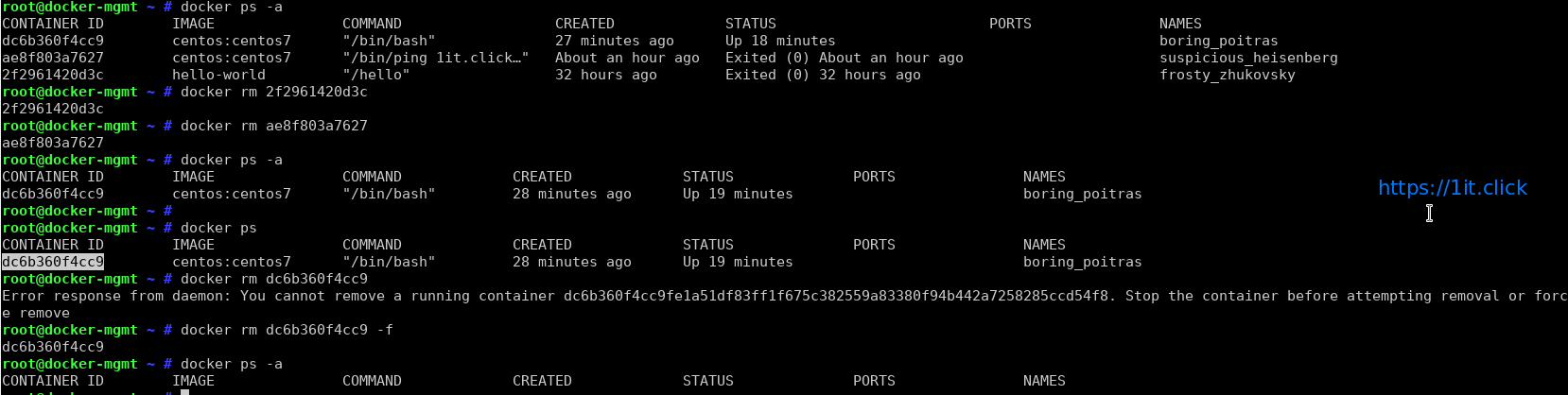

Interactive Method:

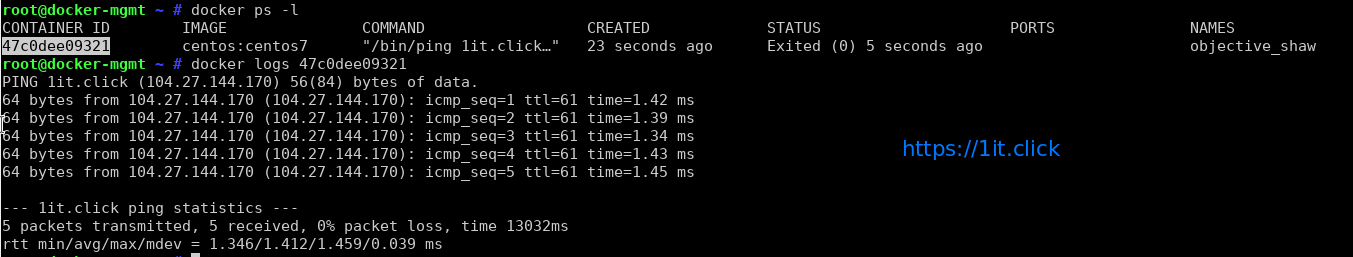

In this method, you download the base Docker OS image -> create a container -> manually launch a shell in it -> perform the customization -> commit the changes.

Committing saves the container's current state as a new Docker image, which can then be stored or distributed.
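The interactive steps above can be scripted roughly as follows. This is a minimal sketch, assuming a Linux host with Docker installed; the function name create_image_interactively and the names in the usage line below (centos-custom, myrepo/centos-custom:v1) are hypothetical examples, not part of the original lab.

```shell
#!/usr/bin/env bash
# Sketch of the interactive method of image creation.
# create_image_interactively is a hypothetical helper name.
#   $1 = base image, $2 = name for the container, $3 = repository/name:tag for the new image
create_image_interactively() {
  # 1. Download the base OS image
  docker pull "$1"

  # 2-4. Create a container and open a shell in it. Perform the customization
  #      inside (e.g. "yum install -y httpd"), then type "exit"; the container stops.
  docker run -it --name "$2" "$1" /bin/bash

  # 5. Commit the (stopped) container's changes as a new image
  docker commit "$2" "$3"

  # List the new image to confirm it was created
  docker images "$3"
}
```

For example, “create_image_interactively centos:7 centos-custom myrepo/centos-custom:v1”. To distribute the committed image, “docker push” uploads it to a registry (after “docker login”), while “docker save -o centos-custom.tar myrepo/centos-custom:v1” exports it to a tar archive for storage.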

Automated method using a Dockerfile:

A Dockerfile is a text file containing the directives (instructions) for creating an image. The “docker build” command reads the Dockerfile and creates and configures the image automatically. Each instruction in a Dockerfile takes the following format:

DIRECTIVE arguments
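To make the “DIRECTIVE arguments” format concrete, the sketch below reads a Dockerfile and prints each instruction split into its directive and its arguments, skipping blank lines and comments. It only illustrates the format and is not how “docker build” itself parses a file: the helper name show_directives is hypothetical, and instructions continued onto the next line with a trailing backslash are not handled.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "DIRECTIVE -> arguments" for each instruction in a Dockerfile.
# Simplification: line continuations (a trailing "\") are not handled.
show_directives() {
  local directive args
  # read splits each line at the first run of whitespace:
  # first word -> directive, rest of the line -> args.
  # "|| [ -n ... ]" also processes a last line that has no trailing newline.
  while read -r directive args || [ -n "$directive" ]; do
    case "$directive" in
      '' | '#'*) continue ;;  # skip blank lines and comment lines
    esac
    printf '%s -> %s\n' "$directive" "$args"
  done < "$1"
}
```

Running “show_directives Dockerfile” against the sample Dockerfile in this lab would print lines such as “ADD -> index.html /var/www/html/” and “EXPOSE -> 80”.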

In the last session on “Docker”, we covered the “interactive way” of creating an image; in this session we are going to create a Docker image using the Dockerfile method.

LAB

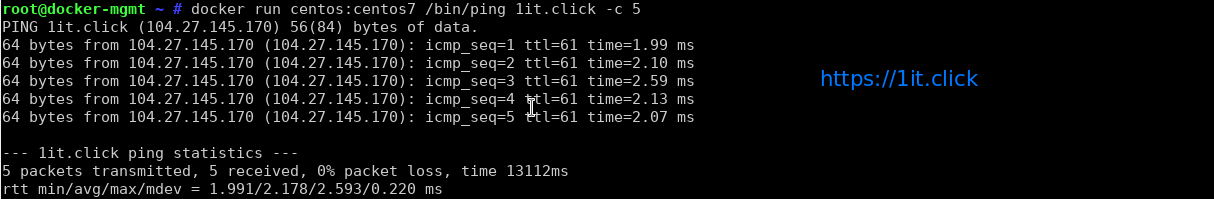

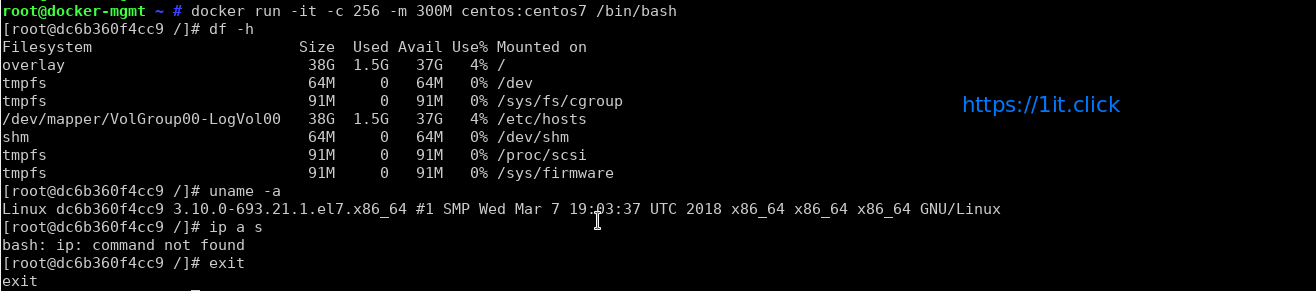

Using a Dockerfile, we are going to create a CentOS 7 image that runs the Apache HTTPD web server. At a high level, the following configuration will be applied to the Docker image:

- Download the official CentOS 7 image.

- Apply the latest package updates to the image.

- Install the Apache HTTP server.

- Add a directive to copy index.html from the Docker management server to the image's document root (i.e. /var/www/html).

- Expose port 80 automatically whenever a container is created from this image.

- Configure Apache HTTPD to start automatically when a container starts.

Below are the directives we are going to use in the Dockerfile.

FROM : this directive specifies the base image from which the custom image is built, for example centos or ubuntu.

RUN : this directive defines a command to be executed while the image is being built.

ADD : this directive defines the files/directories to be copied from the source (the local server) into the image during the build.

ENTRYPOINT : this directive configures the container to run as an executable; the command it defines runs when the container starts.

CMD : this directive defines the default arguments passed to the ENTRYPOINT command.

EXPOSE : this directive declares the network ports on which the container listens; “docker run -P” (or “-p”) publishes them to the host.
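When both ENTRYPOINT and CMD are written in the exec (JSON array) form, as in the sample Dockerfile, Docker starts the container with the ENTRYPOINT command followed by the CMD arguments, and any arguments given after the image name in “docker run” replace CMD while ENTRYPOINT stays the same. The sketch below models only that rule. It is a simplification (plain strings instead of JSON arrays), and effective_command is a hypothetical name.

```shell
#!/usr/bin/env bash
# Simplified model of how exec-form ENTRYPOINT and CMD combine.
# Values mirror the sample Dockerfile; effective_command is a hypothetical helper.
ENTRYPOINT_CMD="/usr/sbin/httpd"
DEFAULT_CMD="-D FOREGROUND"

effective_command() {
  if [ "$#" -gt 0 ]; then
    # Arguments passed after the image name ("docker run IMAGE ARGS...") replace CMD
    printf '%s\n' "$ENTRYPOINT_CMD $*"
  else
    # No arguments: CMD supplies the default arguments to ENTRYPOINT
    printf '%s\n' "$ENTRYPOINT_CMD $DEFAULT_CMD"
  fi
}
```

Here “effective_command” prints “/usr/sbin/httpd -D FOREGROUND”, while “effective_command -v” prints “/usr/sbin/httpd -v”. The real counterpart of the second case is “docker run --rm [image id] -v”, which prints the Apache version and exits instead of starting the web server.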

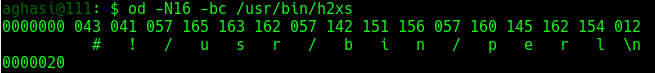

Sample Dockerfile

# use latest centos7 image

FROM centos:latest

# add the image maintainer name and email id

MAINTAINER Aghassi email: [email protected]

# update the centos image with latest available updates

RUN yum update -y

RUN yum clean all

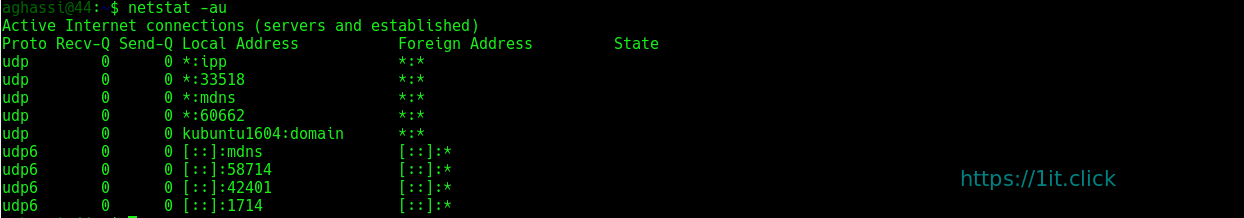

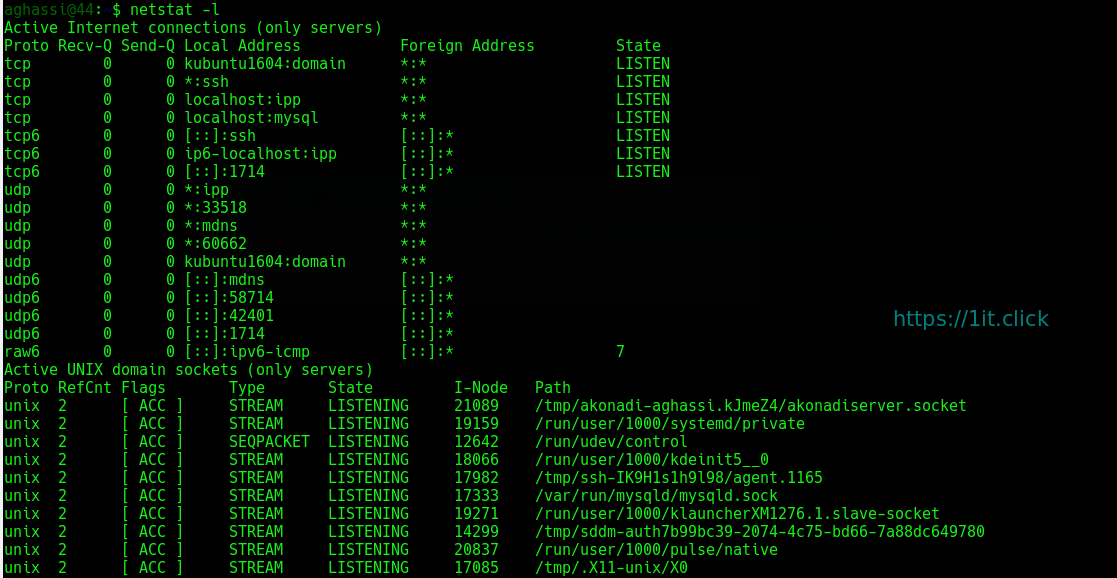

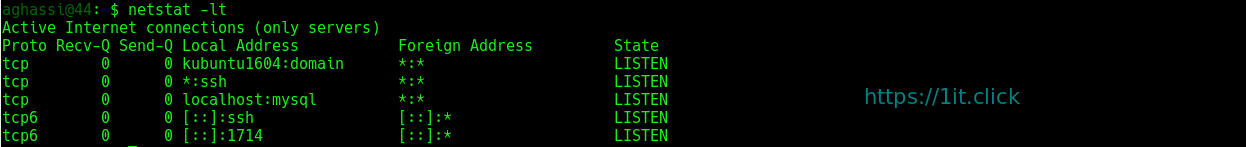

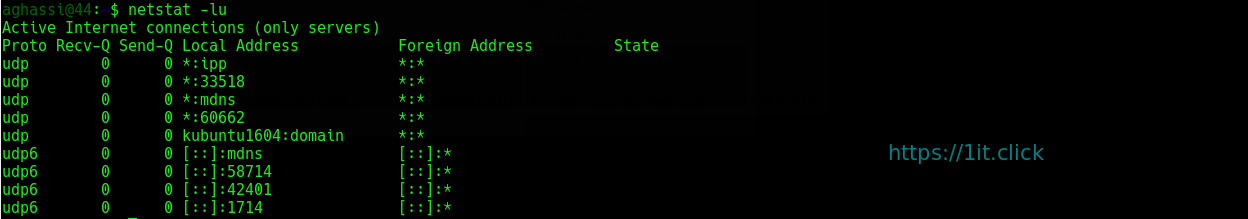

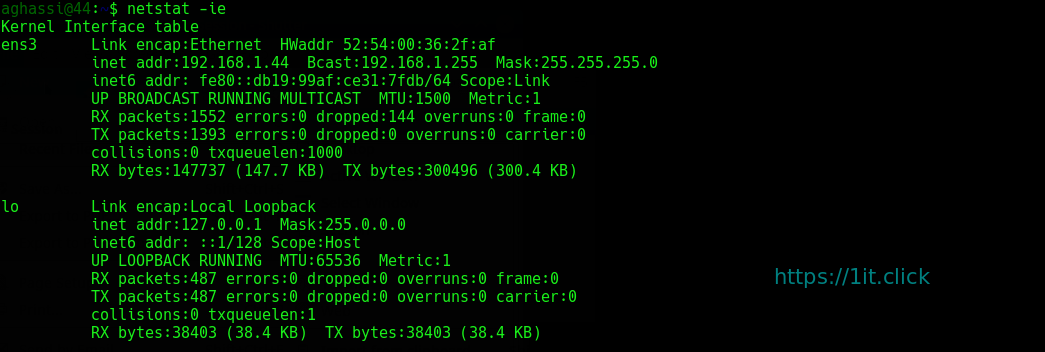

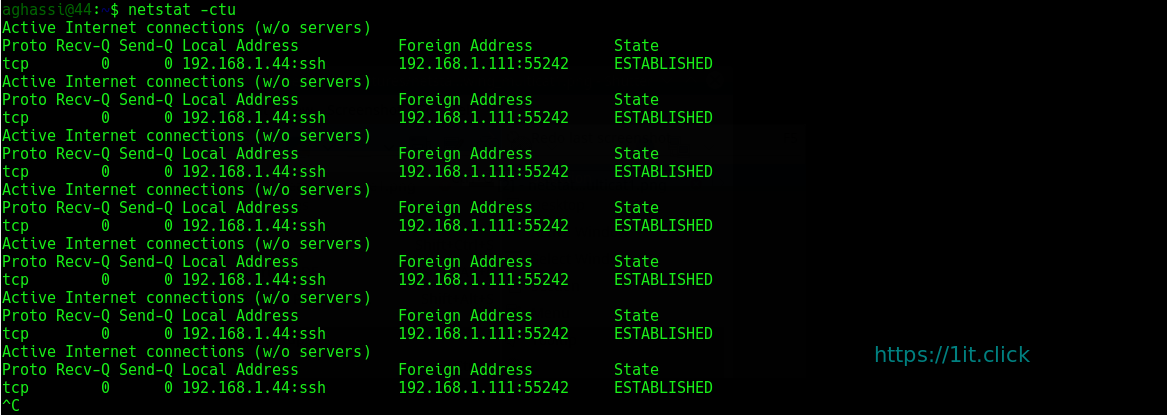

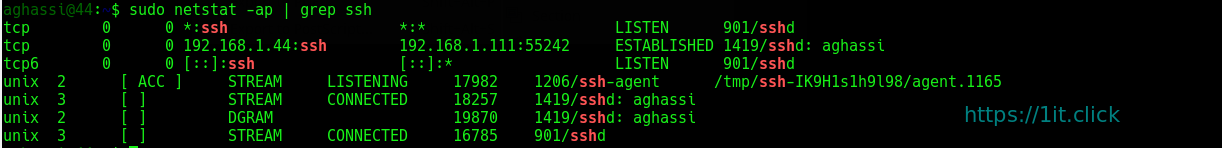

# install network utilities, such as ( ifconfig, netstat, etc)

RUN yum install net-tools -y

# install apache httpd web server

RUN yum install httpd -y

RUN yum clean all

# copy the index.html file from current directory to image's document root

ADD index.html /var/www/html/

# define image to allow listen on port 80 (whenever a container created)

EXPOSE 80

# define the commands to be executed when container boots (created from this image)

ENTRYPOINT [ "/usr/sbin/httpd" ]

CMD [ "-D", "FOREGROUND" ]

Build image

```shell
docker build -t [repository/image_name]:[tag] .
```
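The trailing “.” in the build command is the build context, and ADD can only copy files from it, so the build fails if index.html is not in the directory the command is run from. A small wrapper can check for both files before building. This is a sketch: build_httpd_image and the default tag myrepo/centos-httpd:v1 are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around "docker build" for the lab image.
#   $1 (optional) = repository/image_name:tag; the default below is only an example.
build_httpd_image() {
  local tag="${1:-myrepo/centos-httpd:v1}"
  local f
  # The build context is the current directory ("."); ADD can only copy files from it.
  for f in Dockerfile index.html; do
    if [ ! -f "$f" ]; then
      echo "error: $f not found in $(pwd)" >&2
      return 1
    fi
  done
  docker build -t "$tag" .
}
```

Run it from the lab directory, e.g. “build_httpd_image myrepo/centos-httpd:v1”; “docker images” then lists the new image.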

Test the newly created image by creating a container:

```shell
docker run -it -d -P [image id]
curl [container IP]:80
```
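The test above needs the container's IP address, which “docker run” does not print. The sketch below starts the container, looks up its IP with “docker inspect”, shows which host port -P published for port 80 with “docker port”, and requests the page. It assumes a Linux Docker host (where container IPs on the default bridge network are reachable from the host), a container attached to a single network, and that curl is installed; test_httpd_container is a hypothetical name.

```shell
#!/usr/bin/env bash
# Hypothetical helper that tests the lab image.
#   $1 = image name or ID from the build step
test_httpd_container() {
  local cid ip
  # -d runs the container in the background and prints its ID;
  # -P publishes every EXPOSEd port (here 80) to a random host port.
  cid=$(docker run -d -P "$1") || return 1
  # IP address of the container (assumes it is attached to a single network)
  ip=$(docker inspect --format '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$cid") || return 1
  # Host address:port that -P mapped to container port 80
  docker port "$cid" 80
  # Request the page served from /var/www/html/index.html
  curl -s "http://${ip}:80/"
}
```

“test_httpd_container myrepo/centos-httpd:v1” should print the published host port followed by the contents of index.html. Afterwards, “docker ps” shows the test container's ID and “docker rm -f [container id]” removes it.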

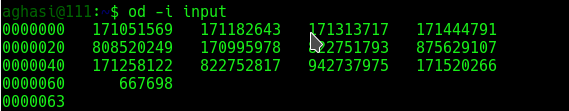

Note: The command example below shows another example of

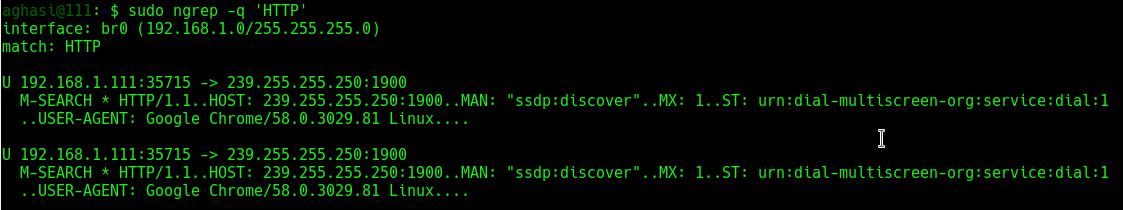

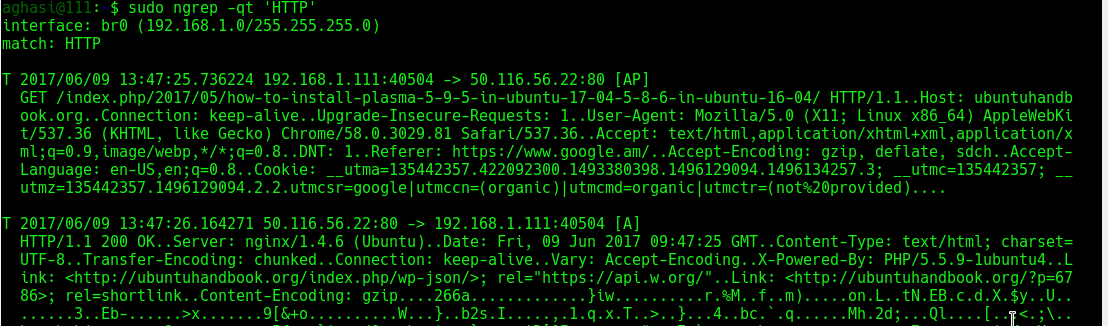

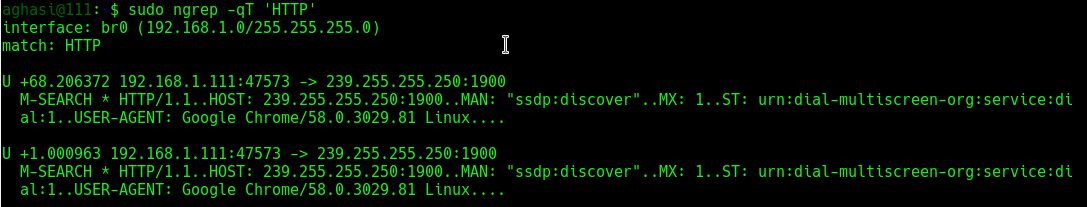

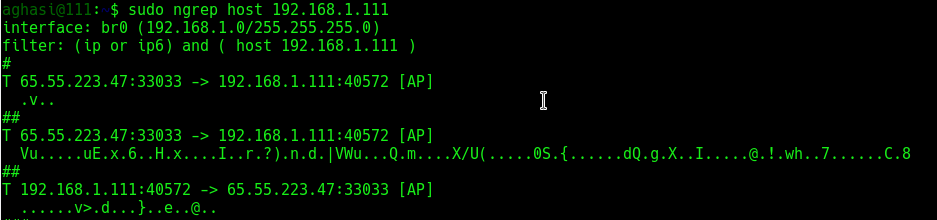

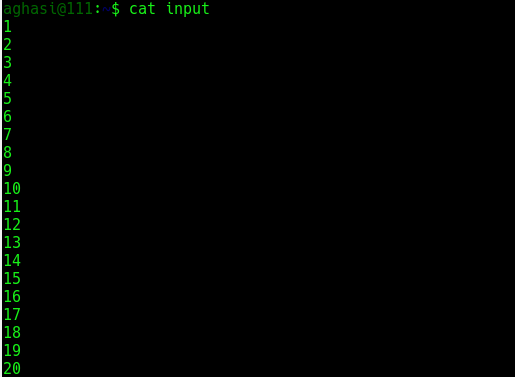

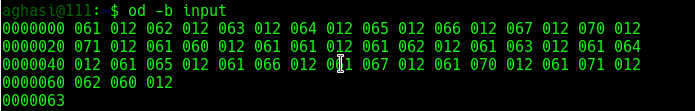

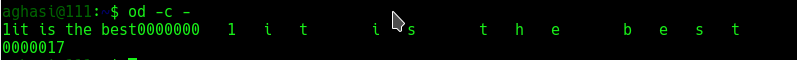

ngrep
